Bug

Resolution: Unresolved

Minor

Jenkins v2.73.3

ec2 1.38

job-restrictions 0.6

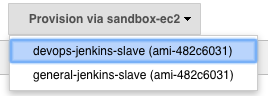

We have ephemeral nodes that the EC2 plugin creates when jobs need them. Because some jobs require elevated AWS permissions, we have created two cloud configurations ('general' and 'devops'); the latter has permissions for actions such as creating AMIs.

The problem we are trying to solve is that any other user can point their job at the 'devops' nodes. The aim is to use the Job Restrictions plugin to restrict these nodes so that they only run jobs in a particular directory. The issue we are facing is that there doesn't appear to be an easy way to integrate the EC2 and Job Restrictions plugins so that the necessary config is applied to the node as it is spun up.

The current workaround is an init script (on the node) that uses jenkins-cli.jar to modify the node's config on the master. This works perfectly when the node is spun up manually via the configure nodes page (see the screenshot), but not when a triggered job causes the plugin to bring up a new node.
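For illustration, the workaround described above can be sketched roughly as follows. This is not our actual init script: it assumes the CLI's `get-node` and `update-node` commands are used to read and write the node's XML, it omits authentication, and the property element `example.HypotheticalNodeProperty`, the function names, and all arguments are placeholders invented for this sketch.

```python
import subprocess
import xml.etree.ElementTree as ET


def add_node_property(node_xml: str, property_xml: str) -> str:
    """Return node_xml with property_xml added under <nodeProperties>.

    The property is only added if no element with the same tag is
    already present, so running the script twice has no extra effect.
    """
    root = ET.fromstring(node_xml)
    props = root.find("nodeProperties")
    if props is None:
        # Some node configs may lack the block entirely; create it.
        props = ET.SubElement(root, "nodeProperties")
    new_prop = ET.fromstring(property_xml)
    if all(child.tag != new_prop.tag for child in props):
        props.append(new_prop)
    return ET.tostring(root, encoding="unicode")


def run_cli(jenkins_url: str, cli_jar: str, *args: str, stdin: str = None) -> str:
    """Run one jenkins-cli.jar command and return its stdout.

    Assumption: no credentials are needed; a real script would have to
    pass whatever authentication the master requires.
    """
    result = subprocess.run(
        ["java", "-jar", cli_jar, "-s", jenkins_url, *args],
        input=stdin,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


def apply_property(jenkins_url: str, cli_jar: str, node_name: str, property_xml: str) -> None:
    """Fetch the node config, add the property, and push it back."""
    current = run_cli(jenkins_url, cli_jar, "get-node", node_name)
    updated = add_node_property(current, property_xml)
    run_cli(jenkins_url, cli_jar, "update-node", node_name, stdin=updated)
```

In such a script only `apply_property` talks to the master; `add_node_property` is a pure function, so the XML handling can be checked without a running Jenkins instance.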

We have observed (by monitoring the node's config, and by calling get-node via the CLI) that the config does get applied in both scenarios, but when a job is the cause of a new node, the configuration reverts to the original a few seconds after the slave has been successfully provisioned.
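The kind of monitoring described above could be approximated with a small polling loop. This is only a sketch under assumptions: `fetch` stands for any callable that returns the node's current XML (for example, a wrapper around the CLI's `get-node`), and the function names and the polling parameters are made up for illustration.

```python
import time
import xml.etree.ElementTree as ET
from typing import Callable, List, Tuple


def node_property_tags(node_xml: str) -> Tuple[str, ...]:
    """Return the tags of the elements under <nodeProperties>, in order."""
    root = ET.fromstring(node_xml)
    props = root.find("nodeProperties")
    if props is None:
        return ()
    return tuple(child.tag for child in props)


def watch_node_properties(
    fetch: Callable[[], str],
    polls: int,
    interval_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> List[Tuple[int, Tuple[str, ...]]]:
    """Poll the node config and record every change in its properties.

    Returns (poll_index, tags) pairs: one for the first poll, then one
    for each poll whose property tags differ from the previous poll.
    """
    changes = []
    previous = None
    for i in range(polls):
        tags = node_property_tags(fetch())
        if tags != previous:
            changes.append((i, tags))
            previous = tags
        if i < polls - 1:
            sleep(interval_s)
    return changes
```

A result in which the property tags appear at one poll and disappear again at a later poll would correspond to the revert described above.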

This happens with any properties in the <nodeProperties> block, not just job restrictions. We can't see any reason why the plugin should spin up nodes differently depending on whether the launch was manual or triggered by a job.